Facial recognition is growing fast. According to MarketsandMarkets, the industry was worth about $6.3 billion in 2023 and is expected to more than double to $13.4 billion by 2028. What used to seem like science fiction is now everywhere you look: at airport gates, inside clothing stores, at hospital doors, and even on the smartphone you carry in your pocket every day.

Even though it is all around us, many businesses don’t know how it works or why it matters. It has become a major part of how the modern world runs, often without us noticing. Understanding how facial identification works is important because there are big questions about what happens when the technology makes a mistake.

In this article, we look at how these systems recognize faces, how they are used in real life, and the tough choices businesses have to make about privacy and fairness as the technology keeps moving forward.

What Is Facial Recognition Technology?

Facial recognition feels like a futuristic technology, but it is already part of your daily life. It is important to understand how it works because it affects your privacy and safety more than you may realize.

Core Definition and History

Put simply, facial recognition uses an image of your face, taken from a photo, a video, or a live camera scan, to confirm who you are. Researchers first tried this in the 1960s, but it took decades to get it right. When Apple released Face ID in 2017, the technology moved from high-security areas right into our pockets. Now, this multi-billion-dollar industry is growing faster than ever.

Key Differences from Other Biometrics

Unlike fingerprints or other contact-based scans, facial recognition doesn’t require you to stop or touch anything. This makes it a good fit for busy places like stadiums or offices. You can’t stop 50,000 people to take fingerprints, but you can scan their faces as they walk by. Because our faces are always visible in public, the technology is powerful, but it also sparks heated debates.

Main Components of Face Recognition Software

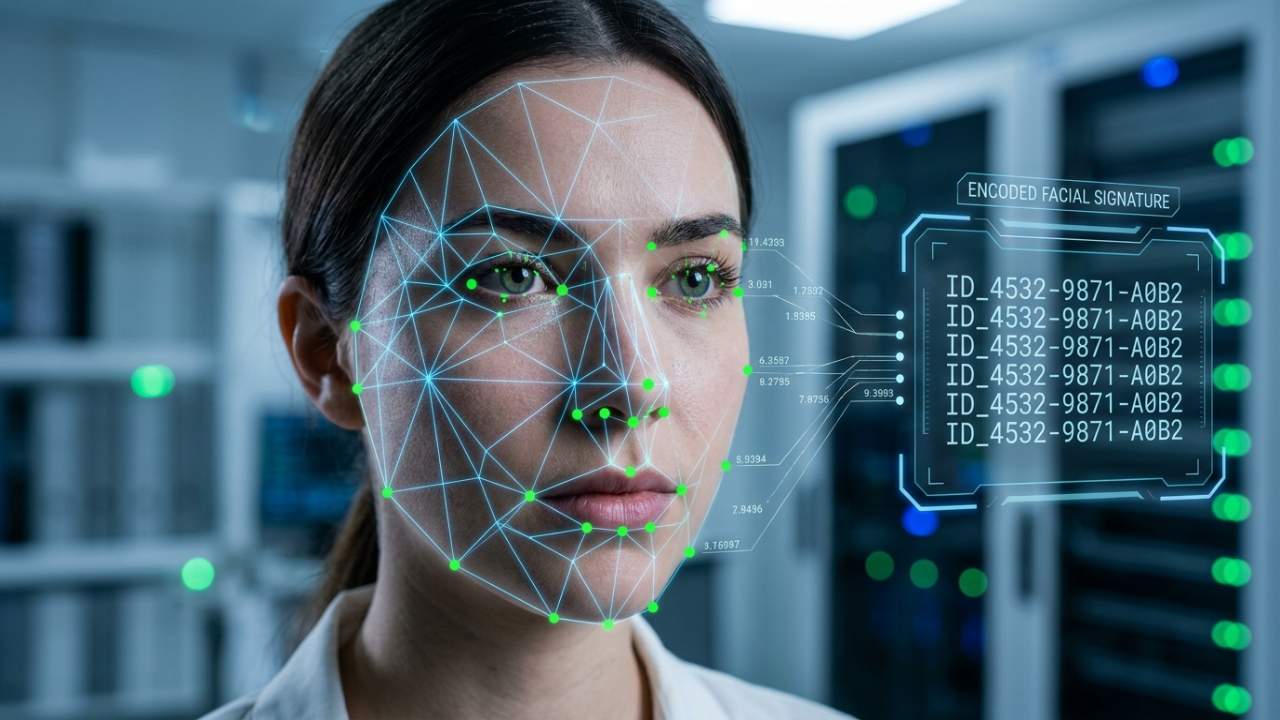

Before you judge these systems, you need to know how they work. Success or failure usually depends on three main components:

Detection and Alignment Tools

The first step is finding a face in a busy scene or a blurry picture. The software looks for the outline of a face and marks important spots, like your eyes, nose, and jaw. It then straightens and centers the face so the system has a clear, steady image to study. If a vendor can’t explain how its system does this, that is a sign the technology might not be very good.
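To make the alignment idea more concrete, here is a minimal Python sketch. It assumes a detector has already returned (x, y) landmark points, and it simply rotates them so the eyes sit on a level line. The function name and the sample coordinates are made up for illustration; commercial systems use their own, more advanced methods.

```python
import math

def align_landmarks(landmarks, left_eye, right_eye):
    """Rotate (x, y) landmarks about the eye midpoint so the eyes are level."""
    dx = right_eye[0] - left_eye[0]
    dy = right_eye[1] - left_eye[1]
    angle = math.atan2(dy, dx)  # tilt of the line between the eyes, in radians

    # Rotate around the midpoint between the eyes by the opposite angle.
    cx = (left_eye[0] + right_eye[0]) / 2
    cy = (left_eye[1] + right_eye[1]) / 2
    cos_a, sin_a = math.cos(-angle), math.sin(-angle)

    aligned = []
    for x, y in landmarks:
        tx, ty = x - cx, y - cy
        aligned.append((cx + tx * cos_a - ty * sin_a,
                        cy + tx * sin_a + ty * cos_a))
    return aligned

# Hypothetical tilted face: the right eye sits lower than the left eye.
left_eye, right_eye, nose = (30.0, 40.0), (70.0, 50.0), (48.0, 65.0)
level = align_landmarks([left_eye, right_eye, nose], left_eye, right_eye)
print(level)  # after alignment, both eyes share the same y value
```

Rotating the points does not change the distances between them, which is why alignment can happen before any measuring is done.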

Feature Extraction Engines

After finding a face, the system maps out over 80 specific points. It turns these points into a unique set of numbers, almost like a digital fingerprint for your face. This is done by AI models trained on millions of photos. Vendors say these systems can scan as many as 10,000 faces every second, which is how they check huge crowds so quickly.

Matching Algorithms

The system compares your face’s numeric code against a large database of saved faces. It then gives a “match score” from 0 to 100 to show how sure it is. For a super-secure building, the score might need to be 98 or higher to let someone in. While these systems are almost perfect in a lab, they can still struggle in the real world with bad lighting or blurry cameras.
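One way to picture a match score is the sketch below. It assumes each face is stored as a list of numbers and uses cosine similarity, scaled to 0 to 100, as the score. That scaling is an assumption made for illustration; every vendor defines its own score, and the sample numbers are invented.

```python
import math

def match_score(code_a, code_b):
    """Return a 0-100 score based on the cosine similarity of two face codes."""
    dot = sum(a * b for a, b in zip(code_a, code_b))
    norm_a = math.sqrt(sum(a * a for a in code_a))
    norm_b = math.sqrt(sum(b * b for b in code_b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    cosine = dot / (norm_a * norm_b)
    # Clamp to [0, 1] so the score always stays between 0 and 100.
    return min(1.0, max(0.0, cosine)) * 100

def is_allowed(score, threshold=98.0):
    """Grant access only when the score reaches the required threshold."""
    return score >= threshold

# Hypothetical codes: one saved at enrollment, one captured at the door.
enrolled = [0.12, 0.80, 0.35]
at_door = [0.10, 0.82, 0.33]
score = match_score(enrolled, at_door)
print(round(score, 2), is_allowed(score))
```

Raising the threshold makes it harder for the wrong person to get in, but it also means the right person is turned away more often.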

Step-by-Step: How Face Recognition Software Works

Knowing the components is one thing, but seeing how they work together is what really matters. This “pipeline” is the step-by-step path a face takes from a camera lens to a computer match. Here is the full journey:

Step 1: Capture and Pre-Process

It all starts when a camera, like the one on your phone or a security camera, takes a photo of you. These pictures are usually messy or blurry, so the system has to “clean” them first. It fixes things like bad lighting or motion blur so the computer can see you clearly. A great example is at Heathrow Airport, where gates scan thousands of people every hour. The system constantly adjusts to different lights and angles to make sure it doesn’t make a mistake.
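As a rough illustration of the “cleaning” step, the Python sketch below stretches the contrast of a dim grayscale image so its darkest pixel becomes 0 and its brightest becomes 255. This is only one simple technique, chosen as an example; it is not a claim about what any airport or vendor actually runs, and real systems also deal with blur, noise, and pose.

```python
def normalize_lighting(gray):
    """Stretch a grayscale image (rows of 0-255 values) to use the full range."""
    flat = [pixel for row in gray for pixel in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        # A completely flat image has no contrast to stretch.
        return [row[:] for row in gray]
    return [[round((pixel - lo) * 255 / (hi - lo)) for pixel in row]
            for row in gray]

# Hypothetical 2x2 image taken in poor light (values bunched together).
dim = [[100, 110],
       [120, 130]]
print(normalize_lighting(dim))  # [[0, 85], [170, 255]]
```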

For a deeper look at how smart systems are transforming passenger movement in high-density environments, explore our detailed guide on

Step 2: Analyze and Encode

Once the image is clean and the face is identified, the system picks out special spots on your face, like the edges of your jaw, the corners of your eyes, and the shape of your nose. It turns these spots into a unique code made of numbers.

The system actually saves and compares this code instead of the photo itself. This makes the data small, very precise, and easy for the computer to read. Thanks to better AI and more practice with different types of faces, these systems have become 10 times more accurate since 2020.
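Here is a toy Python sketch of the encoding idea. It assumes a handful of named landmark points and turns them into a list of distances, each divided by the distance between the eyes so the code does not change when the face is closer to or farther from the camera. Modern systems generally rely on AI models to produce these numbers rather than hand-measured distances, so treat the function and landmark names as illustrative assumptions.

```python
import math
from itertools import combinations

def encode_face(landmarks):
    """Turn named (x, y) landmarks into a scale-independent list of numbers."""
    scale = math.dist(landmarks["left_eye"], landmarks["right_eye"])
    names = sorted(landmarks)  # fixed order so two codes can be compared
    return [math.dist(landmarks[a], landmarks[b]) / scale
            for a, b in combinations(names, 2)]

# Hypothetical landmarks for one face, and the same face twice as large.
face = {"left_eye": (30, 40), "right_eye": (70, 40),
        "nose": (50, 60), "chin": (50, 100)}
closer = {name: (x * 2, y * 2) for name, (x, y) in face.items()}

print(encode_face(face))
print(encode_face(closer))  # same numbers, even though the image is bigger
```

Four landmarks produce six numbers, which is far smaller than the photo they came from.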

Step 3: Compare and Decide

The computer compares your face’s numeric code to the codes stored in its database. It then gives a score to show how sure it is about a match. Depending on the score, it might say “yes, that’s them,” sound an alarm, or say it doesn’t recognize the person at all.
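The three possible outcomes can be sketched in a few lines of Python. This example assumes a small database of stored codes, a separate watchlist of names that should trigger an alarm, and a similarity threshold of 0.9. All of these names and values are hypothetical.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length lists of numbers."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def identify(probe, database, watchlist, threshold=0.9):
    """Return (decision, name, similarity) for the best match in the database."""
    best_name, best_sim = None, -1.0
    for name, code in database.items():
        sim = cosine(probe, code)
        if sim > best_sim:
            best_name, best_sim = name, sim

    if best_name is None or best_sim < threshold:
        return ("unknown", None, best_sim)   # no one is similar enough
    if best_name in watchlist:
        return ("alert", best_name, best_sim)  # recognized and flagged
    return ("match", best_name, best_sim)      # recognized, nothing to flag

# Hypothetical stored codes and watchlist.
database = {"alice": [1.0, 0.0, 0.0], "bob": [0.0, 1.0, 0.0]}
watchlist = {"bob"}

print(identify([0.99, 0.05, 0.0], database, watchlist)[0])  # match
print(identify([0.05, 0.99, 0.0], database, watchlist)[0])  # alert
print(identify([1.0, 1.0, 0.0], database, watchlist)[0])    # unknown
```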

This all happens incredibly fast, usually in less than one second! Because speed is so important, companies often test these systems with special tools before they decide to buy them. This helps them make sure the technology actually works well in a real-life setting before they start using it every day.
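For a sense of what such a test can measure, here is a minimal Python sketch. It assumes you have collected 0 to 100 match scores from a pilot: some from people who really are who they claim to be, and some from people who are not. The score lists below are invented for illustration.

```python
def rates_at_threshold(genuine_scores, impostor_scores, threshold):
    """Return (false_reject_rate, false_accept_rate) at a given threshold."""
    if not genuine_scores or not impostor_scores:
        raise ValueError("both score lists must contain at least one score")
    false_rejects = sum(1 for s in genuine_scores if s < threshold)
    false_accepts = sum(1 for s in impostor_scores if s >= threshold)
    return (false_rejects / len(genuine_scores),
            false_accepts / len(impostor_scores))

# Hypothetical pilot data.
genuine = [99, 97, 95, 90]    # the right person at the door
impostor = [40, 60, 92, 96]   # someone else at the door

print(rates_at_threshold(genuine, impostor, 95))  # (0.25, 0.25)
print(rates_at_threshold(genuine, impostor, 98))  # (0.75, 0.0)
```

In this made-up data, the stricter threshold stops every impostor but also turns away three out of four legitimate people, which is the kind of trade-off a trial is meant to reveal.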

Real-World Applications and Case Studies

This technology is only useful if it solves real problems. In many busy environments, from schools to stores, it is being used in very helpful ways, but also in some ways that make people worry.

Security and Law Enforcement

Police and law enforcement use facial recognition technology to find suspects in crowds or check video footage to solve crimes much faster than they used to. While this helps catch criminals, it also raises big questions about freedom.

China is a major example of this. The country reportedly has over 700 million surveillance cameras, which is about one camera for every two people. Its main system, called “Skynet,” uses AI to pick out faces in a crowd and identify anyone in seconds. In response to privacy concerns, China introduced new rules in 2025. These rules say that companies must ask for permission before scanning a face and can’t make it the only way to get into a building or pay for something. This shows the constant struggle between using technology to keep people safe and protecting our right to privacy.

Everyday Uses in Retail and on Phones

Stores are using facial recognition to stop shoplifting and make shopping feel more personal. Walmart, for example, reportedly used this technology to help lower theft by 20%. Most of us are so used to Face ID on our phones that we don’t think twice when we see similar technology in a store.

During busy times like Black Friday, this technology is a lifesaver for managing crowds. According to a guide by Qwaiting, stores use smart queue systems to track how many people are in line and how fast they are moving. This helps managers move staff to busy areas right away. Instead of getting stuck in a huge, messy rush, shoppers have a smoother experience, which keeps them happy and coming back.
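The idea of tracking line length and speed can be shown with simple arithmetic. The Python sketch below assumes each lane reports how many people are waiting and how many are served per minute, then flags lanes where the estimated wait passes a limit. The lane names, numbers, and the five-minute limit are all hypothetical, and this is not a description of how any particular queue product works.

```python
def estimated_wait_minutes(people_in_line, served_per_minute):
    """Estimate the wait as line length divided by service speed."""
    if served_per_minute <= 0:
        return float("inf")  # a stalled lane never clears
    return people_in_line / served_per_minute

def lanes_needing_staff(lanes, max_wait_minutes=5.0):
    """Return the names of lanes whose estimated wait exceeds the limit."""
    return sorted(name for name, (people, rate) in lanes.items()
                  if estimated_wait_minutes(people, rate) > max_wait_minutes)

# Hypothetical snapshot: (people in line, people served per minute).
lanes = {
    "checkout_1": (6, 2.0),   # about 3 minutes
    "checkout_2": (30, 2.0),  # about 15 minutes
    "returns": (4, 0.5),      # about 8 minutes
}
print(lanes_needing_staff(lanes))  # ['checkout_2', 'returns']
```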

Retailers looking to reduce friction at peak hours can explore how modern queue strategies are reshaping in-store experiences.

Emerging Roles in Health and Events

Hospitals and big event centers are also finding great ways to use this technology. After the pandemic, many places started using cameras that could scan your face and check your temperature at the same time to see if you had a fever. By 2026, about 80% of large sports stadiums are expected to use facial identification to let fans into the game.

If a company wants to start using this technology, experts say they should start small. Instead of putting it everywhere at once, they might test it at just one door or in one small office first. This “test run” helps them see how well it actually works in real life before they spend a lot of money to put it in every building.

In medical environments, such as large hospitals or clinics, safety should never be an afterthought. Hospitals are now using facial recognition technology to manage patient flow across departments effectively. To see in detail how hospitals are achieving this, read:

How Hospitals Use Face Recognition to Improve Patient Trust & Safety

Challenges, Ethics, and Fixes

This is where the conversation gets a bit tougher. A leader deciding whether to use this technology has to look honestly at the problems it can cause. They can’t just ignore the risks or get defensive when people ask hard questions. Some of the major concerns include:

Accuracy and Bias Issues

This is one of the most serious problems with the technology. It doesn’t always work the same for everyone. Studies have found that these systems were much more likely to make mistakes, by up to 34%, when looking at people with darker skin or at women than when looking at men with lighter skin. This isn’t just a small computer glitch; it has caused real-world trouble.

In 2020, for example, police in Detroit arrested innocent people because the computer wrongly identified their faces. Most experts say we don’t have to throw the technology away, but we must make it better. We need to train the computers using photos of all kinds of people. We also need outside experts to check the systems for fairness and always make sure a real person double-checks the computer’s work before anyone gets in trouble.
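A fairness check of this kind can start with very simple counting. The Python sketch below assumes you have test results labeled by demographic group, where each result records whether the two photos really showed the same person and whether the system said they matched. The group labels and results are invented for illustration, and a real audit would need far more data.

```python
from collections import defaultdict

def error_rates_by_group(trials):
    """trials: iterable of (group, same_person, system_said_match)."""
    counts = defaultdict(lambda: {"genuine": 0, "missed": 0,
                                  "impostor": 0, "false_match": 0})
    for group, same_person, said_match in trials:
        c = counts[group]
        if same_person:
            c["genuine"] += 1
            if not said_match:
                c["missed"] += 1       # right person rejected
        else:
            c["impostor"] += 1
            if said_match:
                c["false_match"] += 1  # wrong person accepted

    return {
        group: {
            "false_match_rate": (c["false_match"] / c["impostor"]
                                 if c["impostor"] else None),
            "false_non_match_rate": (c["missed"] / c["genuine"]
                                     if c["genuine"] else None),
        }
        for group, c in counts.items()
    }

# Hypothetical audit results.
trials = [
    ("group_a", True, True), ("group_a", False, False), ("group_a", False, False),
    ("group_b", True, False), ("group_b", False, True), ("group_b", False, False),
]
for group, rates in error_rates_by_group(trials).items():
    print(group, rates)
```

If the rates differ sharply between groups, as they do in this made-up data, that is a signal to retrain the system or keep a human reviewer in the loop.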

Privacy Risks and Regulations

One of the biggest debates today is about “passive identification.” This is when a camera scans your face without you ever knowing or giving permission. It’s a huge deal because it can feel like you’re being followed even when you’re just walking down the street.

Governments are starting to take this very seriously. In Europe, rules are so strict that companies have already been fined over $1 billion for mishandling facial data. By 2026, the new “EU AI Act” is expected to make it even harder to use this technology in ways that could be unfair or invasive.

Future Improvements

New AI chips are making facial identification much faster and more accurate in the real world, not just in a lab. Instead of sending your data to a far-away “cloud” server, many systems now use edge processing, which means they handle everything right on the device (like a smart camera or a robot). This keeps your data safer and makes the system work almost instantly.

In 2026, the technology has reached a point where it’s being used for everything from “Facey” robots to digital check-ins. However, because it’s so powerful, experts have one main piece of advice: don’t just trust a company’s sales pitch.

Conclusion

Facial recognition operates through a precise sequence of detection, alignment, feature extraction, encoding, and matching, where each stage depends entirely on the quality of the one before it. When this pipeline is well-designed and responsibly deployed, the operational value is substantial, but a poorly managed system can lead to measurable and sometimes irreversible consequences.

As the market continues to grow at roughly 16% a year (the rate implied by the forecast of $6.3 billion in 2023 to $13.4 billion in 2028), the primary challenge for leaders is no longer whether to use these systems, but how to engage with them intelligently and ethically.

If you are currently evaluating these systems, the most effective first step is to run a live demo within your actual environment to see how the software handles your specific lighting and crowd density. It is essential to ask vendors hard questions regarding their third-party bias audits and their specific protocols for data handling and retention. Because the regulatory landscape is moving just as fast as the technology itself, staying informed on new compliance laws is the only way to ensure a deployment remains a long-term asset rather than a legal liability.

Now is the time to take the initiative and bring facial recognition software into your business with Qwaiting’s expert advice. Book your 2-week free trial today.